The language of science: Paleo edition

|

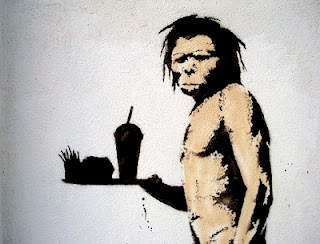

| "Hey, if it worked for Raquel Welch..." |

What really makes me think that this paleo thing is at least worth considering, however, is the language that prominent paleos use when talking about the science underlying nutritional advice. A good example of this can be found in a column by Gary Taubes that was written in response to a much-publicized study by Willett et al. (2012), which purports to show that "eating red meat is associated with a sharply increased risk of death from cancer and heart disease". (HT: A bunch of people.) Taubes is a science writer and, near as I can tell, the intellectual popularizer of the paleo movement. Here he makes some great comments about what characterizes good scientific research:

> Science is ultimately about establishing cause and effect. It’s not about guessing. You come up with a hypothesis — force x causes observation y — and then you do your best to prove that it’s wrong. If you can’t, you tentatively accept the possibility that your hypothesis was right. [...] Here’s Karl Popper saying the same thing: “The method of science is the method of bold conjectures and ingenious and severe attempts to refute them.” The bold conjectures, the hypotheses, making the observations that lead to your conjectures… that’s the easy part. The critical or rectifying episode, which is to say, the ingenious and severe attempts to refute your conjectures, is the hard part. Anyone can make a bold conjecture. (Here’s one: space aliens cause heart disease.) Making the observations and crafting them into a hypothesis is easy. Testing them ingeniously and severely to see if they’re right is the rest of the job — say 99 percent of the job of doing science, of being a scientist.

This should all be drawing nods of approval from anyone trained in the scientific method... say, an economist interested in applied research (pick me!). Gathering a lot of data and observing correlations is only the start. What we really have to do is isolate causal effects and, crucially, establish a reasonable counterfactual. Taubes goes on to critique the Willett et al. study by describing how "associative" relationships are only good for generating hypotheses (i.e. about the causality of those relationships), which must then be tested using experimental settings. Willett and co. apparently don't concern themselves with this vital last step; hence the criticism.

In his article, Taubes concentrates on randomized controlled trials (RCTs), which are the design setting of choice for conducting medical experiments. Unfortunately, carrying out a meaningful experiment in an economic setting can be even more complicated. All too often, we simply aren't able to control for all the relevant factors, or simulate activity within an economy as and when we would want. That means that RCTs are pretty much off the table from the get-go.

Nevertheless, the good news is that empirical researchers have developed some powerful alternatives in the absence of the "laboratory" ideal. We use natural experiments, instrumental variables, difference-in-differences, and other study designs to simulate the laboratory setting and approximate the random assignment of treatment. Using these approaches helps us to be reasonably confident in answering many important economic and policy questions.[*] Say, does raising the minimum wage increase unemployment? (Much to my own surprise, the answer appears to be not really.) Or, how does crime respond to an increased police presence? (Well, according to this excellent study, a 10 percent increase in the police activity of London boroughs brought about a 3 percent drop in crime rates.) If you click on the above links, you'll find an in-depth discussion of the particular circumstances and methodologies that allowed the researchers to treat their studies as experimental settings. These are just two examples, but they provide valuable insight into how good empirical economics is conducted in practice.

Of course, it should also be noted that economists and other social scientists are increasingly reliant on approaches that mimic the laboratory settings of natural scientists. The two most obvious candidates are experimental economics and field experiments. However, my main point in the above paragraph is about how we can still establish causation in the absence of such idealised, controlled settings.
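For readers who haven't met difference-in-differences before, here is a minimal sketch of the basic arithmetic. Everything in it is hypothetical: the function name `did_estimate` and the crime figures are invented for illustration and are not taken from the London study or any other. The idea is to compare the change in a "treated" group with the change in an untreated "control" group over the same period, on the assumption that both groups would otherwise have followed the same trend.

```python
# Toy difference-in-differences (DiD) calculation.
# All numbers are invented for illustration; they come from no real study.

def did_estimate(treated_before, treated_after, control_before, control_after):
    """Return the change in the treated group minus the change in the control group."""
    treated_change = treated_after - treated_before
    control_change = control_after - control_before
    return treated_change - control_change

# Hypothetical average monthly crime counts per district.
crime = {
    "treated": {"before": 100.0, "after": 85.0},  # districts that got extra police
    "control": {"before": 90.0, "after": 87.0},   # districts that did not
}

effect = did_estimate(
    crime["treated"]["before"], crime["treated"]["after"],
    crime["control"]["before"], crime["control"]["after"],
)
print(f"Estimated effect of extra policing: {effect:+.1f} crimes per month")
```

The control group's change stands in for the counterfactual, i.e. what would have happened to the treated districts without the extra police. A naive before-and-after comparison of the treated districts alone would credit the policy with the full drop of 15, including the part that happened everywhere anyway. Real studies do this with regressions, standard errors and checks on the common-trend assumption, but the underlying logic is the same subtraction.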

Going back to the paleo diet, I'm still fairly agnostic about the whole thing despite my curiosity.[**] I certainly don't know enough at this stage to confidently say whether Gary Taubes and co. are fundamentally correct in their views about nutrition. For all I know, the evidence may not be nearly as compelling as they make out. They may even be misrepresenting the views and research efforts of mainstream nutritional experts. However, I do know that Taubes talks about science in a way that makes sense to me. At the very least, that makes me think that he is someone worth listening to.

____

[*] Yes, it is true that definitive answers to some major economic questions remain more elusive. Ideology plays a big role as well, but the lack of an experimental setting partly explains why economists disagree on, say, how effective a stimulus package will be in reducing unemployment.

[**] As my cunning nom de plume will testify, dietary concerns are not high on my list of priorities. I don't feel particularly compelled to change my eating habits as of yet.
